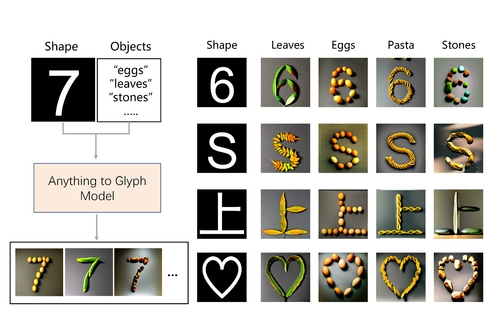

Anything to Glyph: Artistic Font Synthesis via Text-to-Image Diffusion Model

Description

The automatic generation of artistic fonts is a challenging task that attracts considerable research interest. Previous methods focus specifically on glyph or texture style transfer. However, posters and logos often feature creative fonts composed of objects, and existing methods struggle to generate such designs. This paper proposes a novel method for generating creative artistic fonts using a pre-trained text-to-image diffusion model. Our model takes a shape image and a prompt describing an object as input and generates an artistic glyph image composed of such objects. Specifically, we introduce a novel heatmap-based weak position constraint method to guide the positioning of objects in the generated image, and we propose the Latent Space Semantic Augmentation Module, which improves other information while constraining object position. Our approach is unique in that it preserves the object's original shape while constraining its position. Our training method requires only a small quantity of generated data, making it an efficient unsupervised learning approach. Experimental results demonstrate that our method can generate various glyphs, including Chinese, English, Japanese, and symbols, using different objects. We also conducted qualitative and quantitative comparisons with various position control methods for diffusion models. The results indicate that our approach outperforms other methods in terms of visual quality, innovation, and user evaluation.

Event Type

Technical Papers

Time

Tuesday, 12 December 2023, 9:30am - 12:45pm

Location

Darling Harbour Theatre, Level 2 (Convention Centre)