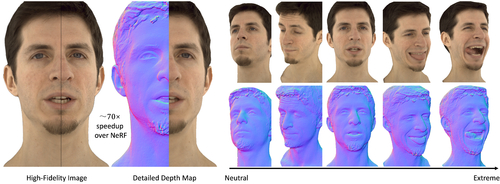

Neural Point-based Volumetric Avatar: Surface-guided Neural Points for Efficient and Photorealistic Volumetric Head Avatar

Description

Rendering photorealistic, vividly moving human heads is important for a pleasant and immersive experience in AR/VR and video conferencing. However, existing methods often struggle to model challenging facial regions (e.g., the mouth interior, eyes, and hair/beard), which leads to unrealistic and blurry results. In this paper, we propose Neural Point-based Volumetric Avatar (NPVA), which represents the head with neural points instead of a mesh, discarding the predefined connectivity and hard correspondence imposed by mesh-based methods, and adopts neural volume rendering. Specifically, the neural points are constrained around the surface of the target expression via a high-resolution UV displacement map, which increases modeling capacity and gives more accurate control. We propose three technical innovations to improve rendering and training efficiency: a patch-wise depth-guided (shading point) sampling strategy, a lightweight radiance decoding process, and a Grid-Error-Patch (GEP) ray sampling strategy used during training. By design, NPVA better handles topologically changing regions and thin structures, and it can be animated with accurate expression control.
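The abstract does not detail the patch-wise depth-guided sampling. As a rough illustration of the general idea behind depth-guided sampling, the Python sketch below places shading points only in a narrow band around a per-ray surface depth (for example, a depth rendered from a tracked face mesh) instead of across the full near/far range. The function name `depth_guided_samples` and the parameters `band`, `near`, and `far` are hypothetical, and this is a simplified per-ray version under those assumptions, not NPVA's patch-wise implementation.

```python
from typing import List, Optional


def depth_guided_samples(
    surface_depth: Optional[float],
    num_samples: int = 8,
    band: float = 0.02,
    near: float = 0.1,
    far: float = 2.0,
) -> List[float]:
    """Return shading-point depths along a single ray (hypothetical sketch).

    Assumption: `surface_depth` is the depth at which the ray hits a guide
    surface (e.g. a rasterized face mesh), or None if the ray misses it.
    Samples are the midpoints of `num_samples` equal bins inside
    [surface_depth - band, surface_depth + band], clamped to [near, far].
    """
    # Rays that miss the guide surface get no samples, so no network queries.
    if surface_depth is None:
        return []
    lo = max(near, surface_depth - band)
    hi = min(far, surface_depth + band)
    # The band lies entirely outside [near, far]: nothing to sample.
    if hi <= lo:
        return []
    step = (hi - lo) / num_samples
    # Bin midpoints give evenly spaced, deterministic samples in the band.
    return [lo + (i + 0.5) * step for i in range(num_samples)]


if __name__ == "__main__":
    print(depth_guided_samples(1.0, num_samples=4, band=0.02))
    print(depth_guided_samples(None))
```

With `num_samples=4` and `band=0.02`, a ray that hits the surface at depth 1.0 gets samples at roughly 0.985, 0.995, 1.005, and 1.015, whereas the same four samples spread uniformly over [0.1, 2.0] would be about 0.475 apart. Concentrating samples near the surface in this way is one plausible reason a depth-guided strategy can reduce the number of radiance queries per ray.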

Event Type

Technical Papers

Time

Tuesday, 12 December 2023, 9:30am - 12:45pm

Location

Darling Harbour Theatre, Level 2 (Convention Centre)