Intrinsic Harmonization for Illumination-Aware Image Compositing

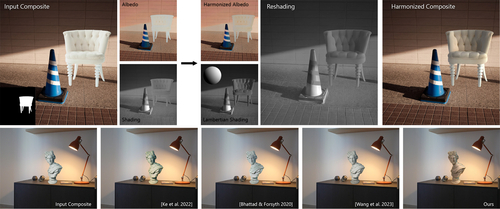

Description

Despite significant advancements in network-based image harmonization techniques, there still exists a domain gap between training pairs and real-world composites encountered during inference. Most existing methods are trained to reverse global edits made on segmented image regions, which fail to accurately capture the lighting inconsistencies between the foreground and background commonly found in composited images. In this work, we introduce a self-supervised illumination harmonization approach formulated in the intrinsic image domain. First, we estimate a simple global lighting model from mid-level vision representations to generate a rough shading for the foreground region. A network then refines this inferred shading to generate a harmonious re-shading that aligns with the background scene. In order to match the color appearance of the foreground and background, we utilize ideas from prior harmonization approaches to perform global image edits in the albedo domain. To validate the effectiveness of our approach, we present results on challenging real-world composites and conduct a user study to objectively measure the enhanced realism achieved compared to state-of-the-art harmonization methods.
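The first step described above, fitting a simple global lighting model and using it to produce a rough foreground shading, can be illustrated with a small sketch. The abstract does not state the form of the lighting model, so everything here is an assumption made for illustration: a single directional light plus a constant ambient term under Lambertian reflectance, per-pixel surface normals and shading values standing in for the "mid-level vision representations", and the hypothetical function names `fit_global_light`, `rough_shading`, and `recompose`. The learned refinement network and the albedo-domain color edits are not modeled.

```python
# Illustrative sketch only. The lighting model (directional + ambient) and all
# function names are assumptions, not the paper's implementation.

def solve_linear(a, b):
    """Solve a small dense system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_global_light(normals, shading):
    """Least-squares fit of shading ~ dot(n, l) + ambient over background pixels.

    The fit ignores the max(0, .) clamp, so it is exact only for pixels
    that face the light. Returns ((lx, ly, lz), ambient).
    """
    rows = [[nx, ny, nz, 1.0] for nx, ny, nz in normals]
    # Normal equations: (A^T A) x = A^T b for the 4 unknowns.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]
    atb = [sum(r[i] * s for r, s in zip(rows, shading)) for i in range(4)]
    lx, ly, lz, ambient = solve_linear(ata, atb)
    return (lx, ly, lz), ambient


def rough_shading(normals, light, ambient):
    """Lambertian shading max(0, dot(n, l)) + ambient for foreground pixels."""
    return [
        max(0.0, sum(ni * li for ni, li in zip(n, light))) + ambient
        for n in normals
    ]


def recompose(albedo, shading):
    """Intrinsic image model: pixel value = albedo * shading (single channel)."""
    return [a * s for a, s in zip(albedo, shading)]
```

In this sketch the light would be fitted on background pixels, then `rough_shading` evaluated on the foreground normals and multiplied by the foreground albedo via `recompose`. For full-resolution images the same least-squares problem would more naturally be solved with an array library than with Python lists.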

Event Type

Technical Papers

Time

Tuesday, 12 December 2023, 9:30am - 12:45pm

Location

Darling Harbour Theatre, Level 2 (Convention Centre)