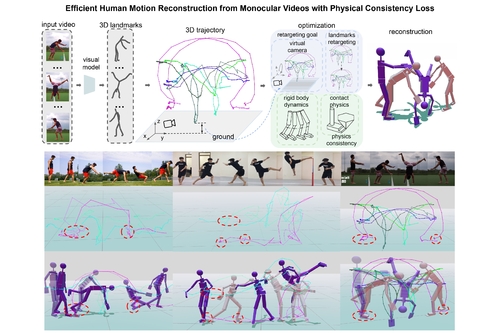

Efficient Human Motion Reconstruction from Monocular Videos with Physical Consistency Loss

Description

Vision-only motion reconstruction from monocular videos often produces artifacts such as foot sliding and jittery motions. Existing physics-based methods typically either simplify the problem to focus solely on feet-ground contacts, or reconstruct full-body contacts within a physics simulator, necessitating the solution of a time-consuming bilevel optimization problem.

To overcome these limitations, we present an efficient gradient-based method for reconstructing complex human motions (including highly dynamic and acrobatic movements) with physical constraints. Our approach reformulates human motion dynamics through a differentiable physical consistency loss within an augmented search space that accounts for both contacts and camera alignment. This enables us to transform the motion reconstruction task into a single-level trajectory optimization problem. Experimental results demonstrate that our method can reconstruct complex human motions from real-world videos in minutes, which is substantially faster than previous approaches. Additionally, the reconstructed results show enhanced physical realism compared to existing methods.
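
To make the idea of a physical consistency loss concrete, here is a minimal sketch under strong simplifying assumptions: a 1D point mass moving vertically, with a per-frame contact force treated as an extra optimization variable. This is not the paper's formulation; the function name `physical_consistency_loss` and all of its parameters are hypothetical and chosen only for illustration. The loss penalizes violations of Newton's second law along the trajectory, so it can be minimized jointly with a tracking term in one single-level problem instead of calling a simulator in an inner loop.

```python
def physical_consistency_loss(z, f, mass, dt, g=9.81):
    """Mean squared violation of m * a = f - m * g along a trajectory.

    z    : heights per frame (hypothetical 1D stand-in for a body pose)
    f    : upward contact force per frame (an extra search variable)
    mass : body mass in kg
    dt   : time step between frames in seconds
    """
    if len(z) < 3 or len(z) != len(f):
        raise ValueError("need at least 3 frames and matching lengths")

    residuals = []
    for t in range(1, len(z) - 1):
        # Central finite difference approximates acceleration at frame t.
        acc = (z[t + 1] - 2.0 * z[t] + z[t - 1]) / dt**2
        # Residual of Newton's second law: inertia minus net external force.
        residuals.append(mass * acc - (f[t] - mass * g))

    return sum(r * r for r in residuals) / len(residuals)


# Free fall with no contact force is physically consistent (loss near 0).
dt = 0.1
fall = [2.0 - 0.5 * 9.81 * (k * dt) ** 2 for k in range(10)]
loss_fall = physical_consistency_loss(fall, [0.0] * 10, mass=70.0, dt=dt)

# Hovering in place with no supporting force is not (large loss).
loss_hover = physical_consistency_loss([1.0] * 10, [0.0] * 10, mass=70.0, dt=dt)
```

In this toy setting, a body that stays still scores zero only when the contact force balances gravity, which is the kind of coupling between motion and contact variables that an augmented search space would expose to a gradient-based optimizer.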

Event Type

Technical Papers

Time

Tuesday, 12 December 2023, 9:30am - 12:45pm

Location

Darling Harbour Theatre, Level 2 (Convention Centre)