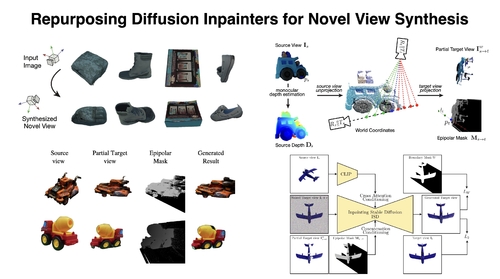

Repurposing Diffusion Inpainters for Novel View Synthesis

Description

In this paper, we present a method for generating consistent novel views from a single source image. Our approach focuses on maximizing the reuse of visible pixels from the source view. To achieve this, we use a monocular depth estimator that transfers visible pixels from the source view to the target view. Additionally, we leverage a learned object shape prior obtained through 2D inpainting diffusion. We also introduce a dedicated fine-tuning technique using diffusion and a novel masking mechanism based on epipolar lines to further improve the quality of our approach. We train our method on the large-scale Objaverse dataset. This allows our framework to perform zero-shot novel view synthesis on a variety of objects. We evaluate the zero-shot abilities of our framework on three challenging datasets: Google Scanned Objects, Ray Traced Multiview, and Common Objects in 3D.
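The pixel-transfer step described above can be illustrated with a generic depth-based forward warp. The following is a minimal sketch and not the authors' implementation: the function name `warp_to_target`, the shared pinhole intrinsics `K`, the source-to-target pose `(R, t)`, nearest-pixel splatting, and the z-buffer tie-breaking are all assumptions made for illustration. It returns the warped image together with a mask of target pixels that received no source pixel, which is the region an inpainting model would be asked to fill.

```python
import numpy as np

def warp_to_target(src_rgb, src_depth, K, R, t):
    """Forward-warp source pixels into a target view using per-pixel depth.

    Assumptions (not from the paper): a pinhole camera with intrinsics K
    shared by both views, and (R, t) mapping source-camera coordinates to
    target-camera coordinates.
    Returns (warped_rgb, hole_mask); hole_mask is True where no source
    pixel landed, i.e. where inpainting would be needed.
    """
    H, W = src_depth.shape
    v, u = np.mgrid[0:H, 0:W]
    pix = np.stack([u, v, np.ones_like(u)], axis=-1).reshape(-1, 3).astype(np.float64)

    # Back-project every source pixel to a 3D point in the source camera frame.
    pts_src = (np.linalg.inv(K) @ pix.T) * src_depth.reshape(1, -1)
    # Move the points into the target camera frame and project them.
    pts_tgt = R @ pts_src + np.asarray(t, dtype=np.float64).reshape(3, 1)
    z = pts_tgt[2]
    valid = z > 1e-6                      # keep points in front of the camera
    proj = K @ pts_tgt
    z_safe = np.where(valid, z, 1.0)      # avoid division by zero
    u_t = np.rint(proj[0] / z_safe).astype(int)
    v_t = np.rint(proj[1] / z_safe).astype(int)
    valid &= (u_t >= 0) & (u_t < W) & (v_t >= 0) & (v_t < H)

    colors = src_rgb.reshape(-1, 3)[valid]
    lin = v_t[valid] * W + u_t[valid]     # flat target pixel index
    z = z[valid]

    # Simple z-buffer: sort near-to-far, then keep the first (nearest)
    # source pixel that lands on each target pixel.
    order = np.argsort(z, kind="stable")
    uniq, first = np.unique(lin[order], return_index=True)

    out = np.zeros_like(src_rgb).reshape(-1, 3)
    out[uniq] = colors[order][first]
    hole = np.ones(H * W, dtype=bool)
    hole[uniq] = False
    return out.reshape(H, W, 3), hole.reshape(H, W)
```

In a pipeline of the kind the abstract outlines, `src_depth` would come from a monocular depth estimator and `hole_mask` would be passed, together with the warped image, to a 2D inpainting model. Both steps are outside the scope of this sketch.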

Event Type

Technical Papers

Time

Tuesday, 12 December 2023, 9:30am - 12:45pm

Location

Darling Harbour Theatre, Level 2 (Convention Centre)